Bone Conduction: The Missing Link?

Anyone who hears themselves speaking on an audio recording experiences that their voice sounds different to others than they perceive it themselves. This is mainly because we perceive our own voices with something others cannot hear: sound transmitted through our bones.

The Basics

Bone-conducted sound is structure-borne sound, i.e., the sound propagating in matter that is in a solid aggregate state. In bone conduction, this solid body is, unsurprisingly, bone. What other people perceive from our voice is airborne sound, i.e., the sound that propagates in the air - a fluid.

Thus, bone conduction refers to the structure-borne sound portion of our voice traveling from the vocal folds, through our bone structure, directly to the inner ear – as opposed to traveling through the air, outer ear, eardrum, and middle ear to the cochlea.

A fundamental difference between the two types of sound is that only longitudinal waves (a physical wave that oscillates in the direction of propagation) can propagate in a fluid such as air. In contrast, in a solid body, transverse waves (physical waves in which the oscillation is perpendicular to their direction of propagation) can also propagate. When so-called couplings of both types of waves occur in thin components such as plates or beams, bending waves can result, which can cause bending deformations.

Thanks to their high sound energy, the bending waves produce airborne sound - think of electrostatic or planar-magnetic loudspeaker membranes, a bell (plate), or a triangle (beam). Singers and choir conductors also use cone sound transmission with a tuning fork on the upper jawbone or temple to ensure precise pitch for the beginning of a piece - and only in this way can they hear the quiet sound of the tuning fork clearly, even in noisy environments.

The speed of sound in structure-borne sound strongly depends on the solid's properties. These include, above all, the density, the sonic rigidity, the transverse contraction coefficient, the elastic modulus (longitudinal waves), and the shear modulus (transverse waves).

Only half-heartedly implemented?

Ordinary headsets do not take bone conduction into account and only perceive the talkers' airborne sound via the microphone when speaking hands-free - and unfortunately, any sound that exists around them: background noise such as street noise, wind and rain, and, above all, other talkers. Especially in noise-sensitive environments, the bone-sound transmission offers a lot of potential to improve the quality of hands-free listening in in-ear headsets. Manufacturers exploit this potential with special, usually piezoelectric microphones (bone conduction sensors) - but they squander a lot of it because they either have not taken bone conduction into account in testing so far and therefore do not optimize their products accordingly or because they conduct non-standardized tests with results that may not be very reliable.

Holistic testing

This is where HEAD acoustics comes in: We enable fully comprehensive testing of headsets with bone conduction sensors. For this purpose, we use our artificial head HMS II.3 LN HEC in the ViBRIDGE version and excite the parts of our artificial ears HEL/HER 4.4 that correspond to the bones of the human head, with a vibration generator (actuator), just like a human's voice would do. This probably doesn't sound overly complicated at first. But if you genuinely want to do it right - and that is always our standard - it is a considerable challenge to generate such a realistic bone conduction signal and transmit it to the bone conduction sensors of in-ear headsets via suitable components of the artificial ear.

How much bone conduction do you want?

The main problem was that, until recently, no one knew which frequency components of the voice arrive at the inner ear - where the structure-borne sound transducers are located - and at what volume. To get to the bottom of this question, we performed a whole series of measurements with human talkers and used in-ear headset replicas with bone conduction sensors to measure how bone conduction "sounds." From the results of these extensive tests, we could deduce that the transmission of structure-borne sound does not differ significantly between women and men. However, male voices are better suited for our purposes since women have a higher fundamental voice frequency. Thus the transmission of structure-borne sound is not measurable in the low-frequency transmission range.

The measurements allowed us to get a good overview of the individual differences between the talkers. From the results of this scatter analysis, we derived mean transfer functions that serve as the basis for a more realistic simulation of structure-borne sound in customized lab tests. In other words, we recreate a structure-borne sound signal that corresponds for the first time to the results of the measurements from our tests. We generate this with the help of the actuator mentioned above in the artificial ear of the HMS II.3 LN-HEC artificial head parallel with the voice signal component transmitted through the air, generated by our fullband-capable artificial mouth with a two-way loudspeaker.

We ensure that the signals we generate are within the range of the structure-borne sound transmission in humans. Comparing the measurement results from the tests with human talkers and the simulations with ViBRIDGE shows a clear correlation: Our simulation results match the actual human results. This means: With HEAD acoustics ViBRIDGE, headset manufacturers can test their headsets with structure-borne sound sensors holistically, correctly, and reliably by simulating bone conduction in the laboratory.

Why headset manufacturers need to consider bone conduction

Better separation of the voice from noise or competing talkers provides the perfect basis for optimal noise cancellation and fundamentally improves speech quality in noisy environments. But that's not all: Sophisticated technologies such as echo cancellation and other signal processing in headsets also benefit from perfect voice detection - the transmission of the voice's bone-sound component results in a speech signal that has already been ideally denoised in advance.

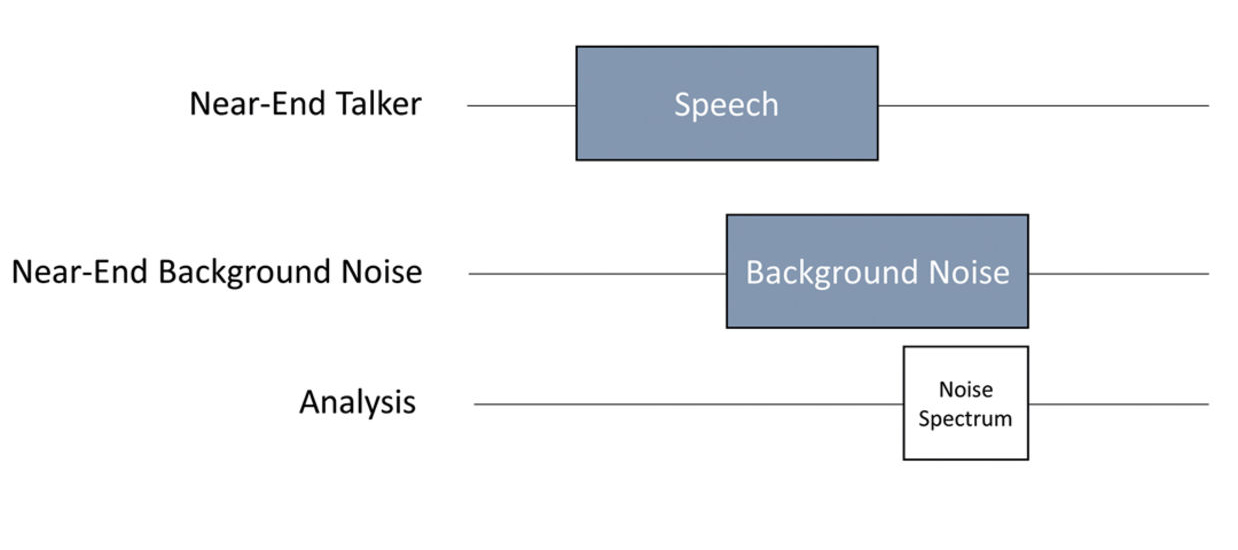

Here we show an example of the possibility of suppressing noise better with the help of structure-borne sound signals. Imagine you move from a quiet room into a canteen during a telephone call. The background noise situation changes abruptly. We can easily simulate this change in the lab by introducing the corresponding background noise via a background noise simulation system such as 3PASS lab or 3PASS flex after the start of speech playback via the artificial head's mouth (Fig. 1).

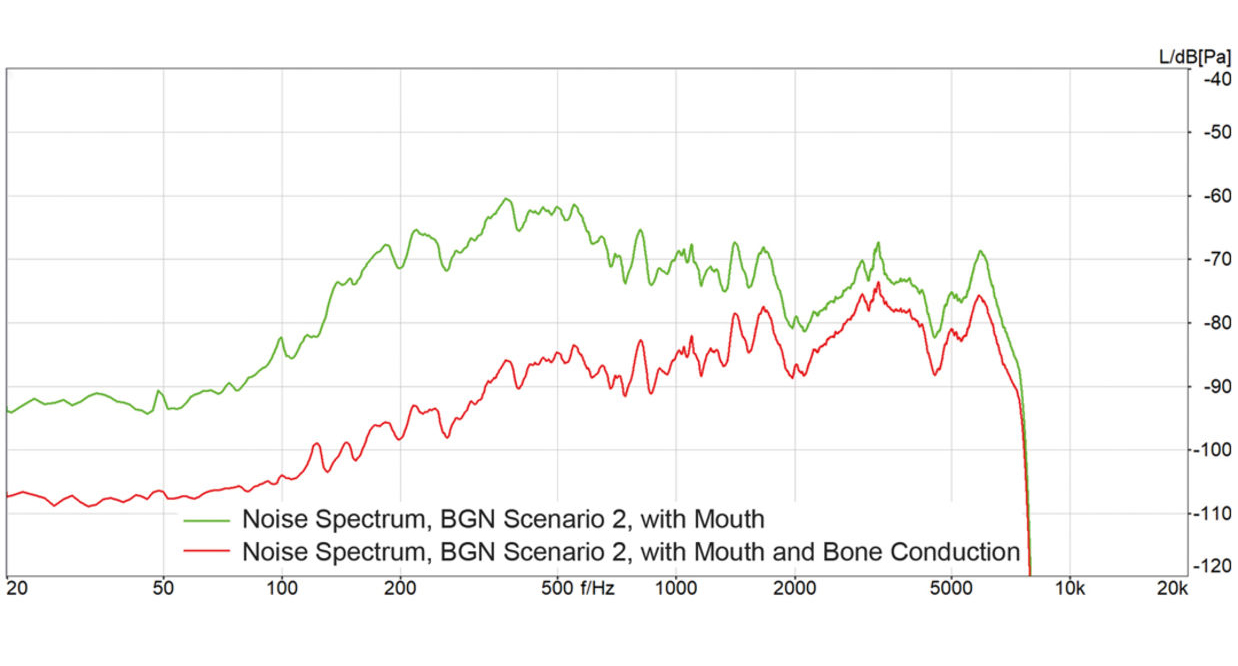

The noise canceller of the headset microphone suddenly has to cope with a completely different background noise situation and should still suppress the background noise as effectively as possible while maintaining the speech signal quality as well as possible. This is precisely where the bone conduction sensor helps enormously: The comparison of the bone conduction signal with the airborne sound captured by the microphone separates the user's speech from the background noise and thus improves the adaptation of the noise suppression. During each slight pause in speech, the noise canceller can "estimate" the background noise and generate the "anti-sound" (a sound excitation in phase opposition to the noise) more precisely. The result is a measurably faster and improved adaptation to the background noise: Figure 2 shows the measured power density spectrum of the background noise for a headset with and without simulation of structure-borne sound by the artificial head. It can be seen that a reduction of the background noise by between 8 and 28 dB is possible when simulating the structure-borne sound excitation over the entire frequency range. This considerable improvement is only possible by using the structure-borne sound signal.

What do you need?

As you can see, structure-borne sound simulation is crucial when testing the relevant headsets. Only with a standardized, complementary simulation of the airborne and structure-borne sound components generated by the human voice is it possible to evaluate and optimize headsets in various conversational situations in an objectively reproducible and holistic manner. HEAD acoustics offers a complete all-in-one solution for testing, optimizing, and validating appropriate headsets.

For the simulations, in addition to the artificial head HMS II.3 ViBRIDGE and the artificial ears HEL/HER 4.4 ViBRIDGE, we use our software for automated background noise simulation, 3PASS lab in interaction with labCORE, the modular hardware platform for speech quality and audio quality tests, and the measurement and analysis software ACQUA to control the entire test procedure.